Deploy a cluster with an ARM template

Note that Compute Node Image should be one with the suffix HPC, and Compute Node VM Size should be Standard_H16r or Standard_H16mr in the H-series, so that the cluster is RDMA-capable.

Run the Intel MPI Benchmark PingPong

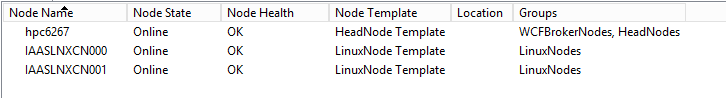

Log on to the head node hpc6267 and bring the nodes online

Submit a job to run MPI PingPong among the Linux compute nodes

job submit /numnodes:2 "source /opt/intel/impi/`ls /opt/intel/impi`/bin64/mpivars.sh && mpirun -env I_MPI_FABRICS=shm:dapl -env I_MPI_DAPL_PROVIDER=ofa-v2-ib0 -env I_MPI_DYNAMIC_CONNECTION=0 -env I_MPI_FALLBACK_DEVICE=0 -f $CCP_MPI_HOSTFILE -ppn 1 IMB-MPI1 pingpong | tail -n30"

The host file or machine file for an MPI task is generated automatically.

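The environment variable $CCP_MPI_HOSTFILE can be used in the task command to get the file name.

The environment variable $CCP_MPI_HOSTFILE_FORMAT can be set to specify the format of the host file or machine file.

The default host file format is:

    nodename1
    nodename2
    …
    nodenameN

When $CCP_MPI_HOSTFILE_FORMAT=1, the format is:

    nodename1:4
    nodename2:4
    …
    nodenameN:4

When $CCP_MPI_HOSTFILE_FORMAT=2, the format is:

    nodename1 slots=4
    nodename2 slots=4
    …
    nodenameN slots=4

When $CCP_MPI_HOSTFILE_FORMAT=3, the format is:

    nodename1 4
    nodename2 4
    …
    nodenameN 4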
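To make the four layouts easier to compare, here is a minimal sketch that prints them for a made-up node list. HPC Pack generates the real file itself; render_hostfile is a hypothetical helper, and treating any value other than 1, 2, or 3 as the default layout is an assumption of this sketch.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of HPC Pack): print host file lines in the
# layout selected by a CCP_MPI_HOSTFILE_FORMAT-style value.
# Usage: render_hostfile FORMAT CORES_PER_NODE NODE...
render_hostfile() {
    local format="$1" cores="$2"
    shift 2
    local node
    for node in "$@"; do
        case "$format" in
            1) printf '%s:%s\n' "$node" "$cores" ;;
            2) printf '%s slots=%s\n' "$node" "$cores" ;;
            3) printf '%s %s\n' "$node" "$cores" ;;
            # Assumption: anything else means the default, names only.
            *) printf '%s\n' "$node" ;;
        esac
    done
}

# Show format 1 for two illustrative nodes with 4 cores each.
render_hostfile 1 4 nodename1 nodename2
```

Format 1 is the layout the OpenFOAM tasks later in this article request through CCP_MPI_HOSTFILE_FORMAT=1.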

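Check the task result in HPC Pack 2016 Cluster Manager

Run an OpenFOAM workload

Download and install Intel MPI

Intel MPI is already installed in the Linux image CentOS_7.4_HPC, but building OpenFOAM needs a newer version, which can be downloaded from Intel MPI Library.

Use clusrun to download and silently install Intel MPI: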

clusrun /nodegroup:LinuxNodes /interleaved "wget https://registrationcenter-download.intel.com/akdlm/irc_nas/tec/13063/l_mpi_2018.3.222.tgz && tar -zxvf l_mpi_2018.3.222.tgz && sed -i -e 's/ACCEPT_EULA=decline/ACCEPT_EULA=accept/g' ./l_mpi_2018.3.222/silent.cfg && ./l_mpi_2018.3.222/install.sh --silent ./l_mpi_2018.3.222/silent.cfg"
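After this install, /opt/intel/impi may contain more than one version directory, in which case the backtick `ls /opt/intel/impi` used in the PingPong command would expand to several names. A minimal sketch of one way to pick the highest version, assuming version-named subdirectories and a sort that supports -V; latest_impi_dir is a hypothetical helper, and the older version number in the demo is illustrative only.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the highest version-named entry under a root
# directory, using version-aware sorting (sort -V).
latest_impi_dir() {
    local root="$1"
    ls -1 "$root" | sort -V | tail -n 1
}

# Demo against a throwaway directory that imitates /opt/intel/impi.
# "5.1.3.223" is an illustrative older version, not taken from the image.
demo_root="$(mktemp -d)"
mkdir "$demo_root/5.1.3.223" "$demo_root/2018.3.222"
echo "selected: $(latest_impi_dir "$demo_root")"
rm -rf "$demo_root"
```

The later commands in this article avoid the problem by naming /opt/intel/impi/2018.3.222 explicitly.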

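Download and compile OpenFOAM

OpenFOAM packages can be downloaded from the OpenFOAM download page.

Before building OpenFOAM, we need to install zlib-devel and Development Tools on the Linux compute nodes (CentOS), change the value of the variable WM_MPLIB from SYSTEMOPENMPI to INTELMPI in the OpenFOAM environment settings file bashrc, and source the Intel MPI environment settings file mpivars.sh and the OpenFOAM environment settings file bashrc.

Optionally, the environment variable WM_NCOMPPROCS can be set to specify how many processors to use for compiling OpenFOAM, which can speed up the compilation.

Use clusrun to do all of the above: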

clusrun /nodegroup:LinuxNodes /interleaved "yum install -y zlib-devel && yum groupinstall -y 'Development Tools' && wget https://sourceforge.net/projects/openfoamplus/files/v1806/ThirdParty-v1806.tgz && wget https://sourceforge.net/projects/openfoamplus/files/v1806/OpenFOAM-v1806.tgz && mkdir /opt/OpenFOAM && tar -xzf OpenFOAM-v1806.tgz -C /opt/OpenFOAM && tar -xzf ThirdParty-v1806.tgz -C /opt/OpenFOAM && cd /opt/OpenFOAM/OpenFOAM-v1806/ && sed -i -e 's/WM_MPLIB=SYSTEMOPENMPI/WM_MPLIB=INTELMPI/g' ./etc/bashrc && source /opt/intel/impi/2018.3.222/bin64/mpivars.sh && source ./etc/bashrc && export WM_NCOMPPROCS=$((`grep -c ^processor /proc/cpuinfo`-1)) && ./Allwmake"
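The command above sets WM_NCOMPPROCS to the processor count minus one, which evaluates to 0 on a single-core machine. A minimal sketch of the same calculation with a floor of 1; compile_procs is a hypothetical helper, and nproc is swapped in here for the grep over /proc/cpuinfo.

```shell
#!/usr/bin/env bash
# Hypothetical helper: leave one core free for the system, but never
# return fewer than 1 compile process.
compile_procs() {
    local total="$1"
    local procs=$(( total - 1 ))
    if (( procs < 1 )); then
        procs=1
    fi
    echo "$procs"
}

# nproc stands in for `grep -c ^processor /proc/cpuinfo`; fall back to 1
# if nproc is unavailable.
total_cores="$(nproc 2>/dev/null || echo 1)"
export WM_NCOMPPROCS="$(compile_procs "$total_cores")"
echo "WM_NCOMPPROCS=$WM_NCOMPPROCS"
```

On a 16-core node this gives 15, the same value the original one-liner computes.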

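Create a share in the cluster

Create a folder named openfoam on the head node and share it with Everyone with Read/Write permission.

Use clusrun to create the directory /openfoam and mount the share on the Linux compute nodes:

clusrun /nodegroup:LinuxNodes "mkdir /openfoam && mount -t cifs //hpc6267/openfoam /openfoam -o vers=2.1,username=hpcadmin,dir_mode=0777,file_mode=0777,password='********'"

Remember to replace the username and password in the command above when copying it.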
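As an alternative to putting the password on the command line, mount.cifs accepts a credentials=FILE option that reads username= and password= lines from a file. The following is only a sketch under that assumption: write_cifs_credentials is a hypothetical helper, the values are placeholders, and the file would have to exist on every compute node before mounting.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a mount.cifs credentials file that only its
# owner can read.
write_cifs_credentials() {
    local file="$1" user="$2" pass="$3"
    ( umask 077 && printf 'username=%s\npassword=%s\n' "$user" "$pass" > "$file" )
    chmod 600 "$file"
}

# Demo with placeholder values; the mount command is printed, not executed.
cred_file="$(mktemp)"
write_cifs_credentials "$cred_file" hpcadmin 'placeholder-password'
echo "mount -t cifs //hpc6267/openfoam /openfoam -o vers=2.1,credentials=$cred_file,dir_mode=0777,file_mode=0777"
rm -f "$cred_file"   # clean up the demo file
```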

Prepare the environment settings file for running MPI tasks

Create the file settings.sh in the share with the following code:

    #!/bin/bash

    # impi
    source /opt/intel/impi/2018.3.222/bin64/mpivars.sh
    export MPI_ROOT=$I_MPI_ROOT
    export I_MPI_FABRICS=shm:dapl
    export I_MPI_DAPL_PROVIDER=ofa-v2-ib0
    export I_MPI_DYNAMIC_CONNECTION=0

    # openfoam
    source /opt/OpenFOAM/OpenFOAM-v1806/etc/bashrc

If the file is edited on the head node, pay attention to the line endings: they should be \n rather than \r\n.
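A file saved with \r\n line endings on the Windows head node can fail when sourced on Linux. Here is a minimal sketch for detecting and removing the carriage returns; has_crlf and to_lf are hypothetical helper names, and the demo runs on a temporary file rather than the real settings.sh.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if the file has at least one CRLF line ending.
has_crlf() {
    grep -q $'\r$' "$1"
}

# Hypothetical helper: strip the trailing carriage return from every line,
# rewriting the file contents in place so its permissions are kept.
to_lf() {
    local tmp
    tmp="$(mktemp)"
    sed $'s/\r$//' "$1" > "$tmp" && cat "$tmp" > "$1"
    rm -f "$tmp"
}

# Demo on a temporary file written with Windows line endings.
demo="$(mktemp)"
printf '#!/bin/bash\r\nexport I_MPI_FABRICS=shm:dapl\r\n' > "$demo"
if has_crlf "$demo"; then
    to_lf "$demo"
fi
has_crlf "$demo" || echo "line endings are LF"
rm -f "$demo"
```

On a compute node the same helper could be pointed at /openfoam/settings.sh.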

Prepare sample data for the OpenFOAM job

Copy the sample sloshingTank3D from the OpenFOAM tutorials directory to the share openfoam.

(Optional) Change the value of deltaT from 0.05 to 0.5 and the value of writeInterval from 0.05 to 0.5 in /openfoam/sloshingTank3D/system/controlDict to accelerate data processing.

Modify the file /openfoam/sloshingTank3D/system/decomposeParDict according to the number of cores to use; see the OpenFOAM User Guide: 3.4 Running applications in parallel.

    /*--------------------------------*- C++ -*----------------------------------*\
    | =========                 |                                                 |
    | \\      /  F ield         | OpenFOAM: The Open Source CFD Toolbox           |
    |  \\    /   O peration     | Version:  v1806                                 |
    |   \\  /    A nd           | Web:      www.OpenFOAM.com                      |
    |    \\/     M anipulation  |                                                 |
    \*---------------------------------------------------------------------------*/
    FoamFile
    {
        version     2.0;
        format      ascii;
        class       dictionary;
        object      decomposeParDict;
    }
    // * * * * * * * * * * * * * * * * * * * * * * * * * * * * * * * * * * * * * //

    numberOfSubdomains 32;

    method          hierarchical;

    coeffs
    {
        n           (1 1 32);
        //delta       0.001; // default=0.001
        //order       xyz;   // default=xzy
    }

    distributed     no;

    roots
    (
    );

    // ************************************************************************* //

Prepare the sample data in /openfoam/sloshingTank3D. The following code can be used when doing this manually on a Linux compute node:

    cd /openfoam/sloshingTank3D
    source /openfoam/settings.sh
    source /opt/OpenFOAM/OpenFOAM-v1806/bin/tools/RunFunctions
    m4 ./system/blockMeshDict.m4 > ./system/blockMeshDict
    runApplication blockMesh
    cp ./0/alpha.water.orig ./0/alpha.water
    runApplication setFields

Submit a job to do all of the above:

    set CORE_NUMBER=32
    job submit "cp -r /opt/OpenFOAM/OpenFOAM-v1806/tutorials/multiphase/interFoam/laminar/sloshingTank3D /openfoam/ && sed -i 's/deltaT 0.05;/deltaT 0.5;/g' /openfoam/sloshingTank3D/system/controlDict && sed -i 's/writeInterval 0.05;/writeInterval 0.5;/g' /openfoam/sloshingTank3D/system/controlDict && sed -i 's/numberOfSubdomains 16;/numberOfSubdomains %CORE_NUMBER%;/g' /openfoam/sloshingTank3D/system/decomposeParDict && sed -i 's/n (4 2 2);/n (1 1 %CORE_NUMBER%);/g' /openfoam/sloshingTank3D/system/decomposeParDict && cd /openfoam/sloshingTank3D/ && m4 ./system/blockMeshDict.m4 > ./system/blockMeshDict && source /opt/OpenFOAM/OpenFOAM-v1806/bin/tools/RunFunctions && source /opt/OpenFOAM/OpenFOAM-v1806/etc/bashrc && runApplication blockMesh && cp ./0/alpha.water.orig ./0/alpha.water && runApplication setFields"
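With the hierarchical method, the three values of n in decomposeParDict must multiply out to numberOfSubdomains, which is why both are rewritten together from CORE_NUMBER. A minimal sketch of that consistency check, with check_decomposition as a hypothetical helper that takes the values as arguments instead of parsing the dictionary file:

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if nx * ny * nz equals the number of
# subdomains. Usage: check_decomposition SUBDOMAINS NX NY NZ
check_decomposition() {
    local subdomains="$1" nx="$2" ny="$3" nz="$4"
    (( nx * ny * nz == subdomains ))
}

# Same core count as the job above; n is (1 1 CORE_NUMBER).
CORE_NUMBER=32
if check_decomposition "$CORE_NUMBER" 1 1 "$CORE_NUMBER"; then
    echo "n (1 1 $CORE_NUMBER) matches numberOfSubdomains $CORE_NUMBER"
else
    echo "n does not multiply out to numberOfSubdomains" >&2
fi
```

The tutorial's values before the edit, 16 subdomains with n (4 2 2), pass the same check.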

Create a job containing MPI tasks to process the data

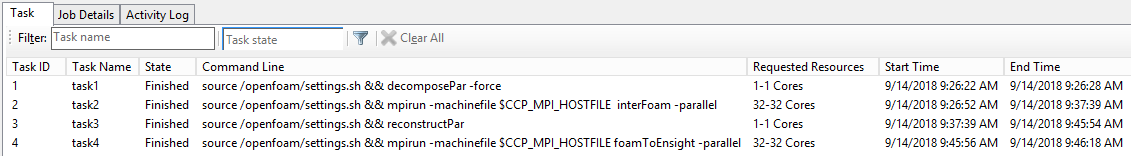

Create a job and add 4 tasks with dependencies

| Task name | Dependent task | Cores | Command | Environment variable |
| --- | --- | --- | --- | --- |
| task1 | N/A | 1 | source /openfoam/settings.sh && decomposePar -force | N/A |
| task2 | task1 | 32 | source /openfoam/settings.sh && mpirun -machinefile $CCP_MPI_HOSTFILE interFoam -parallel | CCP_MPI_HOSTFILE_FORMAT=1 |
| task3 | task2 | 1 | source /openfoam/settings.sh && reconstructPar | N/A |
| task4 | task3 | 32 | source /openfoam/settings.sh && mpirun -machinefile $CCP_MPI_HOSTFILE foamToEnsight -parallel | CCP_MPI_HOSTFILE_FORMAT=1 |

For each task, set the working directory to /openfoam/sloshingTank3D and the standard output to ${CCP_JOBID}.${CCP_TASKID}.log.

Do all of the above with commands:

    set CORE_NUMBER=32
    job new
    job add !! /workdir:/openfoam/sloshingTank3D /name:task1 /stdout:${CCP_JOBID}.${CCP_TASKID}.log "source /openfoam/settings.sh && decomposePar -force"
    job add !! /workdir:/openfoam/sloshingTank3D /name:task2 /stdout:${CCP_JOBID}.${CCP_TASKID}.log /depend:task1 /numcores:%CORE_NUMBER% /env:CCP_MPI_HOSTFILE_FORMAT=1 "source /openfoam/settings.sh && mpirun -machinefile $CCP_MPI_HOSTFILE interFoam -parallel"
    job add !! /workdir:/openfoam/sloshingTank3D /name:task3 /stdout:${CCP_JOBID}.${CCP_TASKID}.log /depend:task2 "source /openfoam/settings.sh && reconstructPar"
    job add !! /workdir:/openfoam/sloshingTank3D /name:task4 /stdout:${CCP_JOBID}.${CCP_TASKID}.log /depend:task3 /numcores:%CORE_NUMBER% /env:CCP_MPI_HOSTFILE_FORMAT=1 "source /openfoam/settings.sh && mpirun -machinefile $CCP_MPI_HOSTFILE foamToEnsight -parallel"
    job submit /id:!!

Get the result

Check the job result in HPC Pack 2016 Cluster Manager

The result of the sample sloshingTank3D is generated as the file \\hpc6267\openfoam\sloshingTank3D\EnSight\sloshingTank3D.case, which can be viewed with EnSight.